Multi-Cluster (Cluster Mesh)

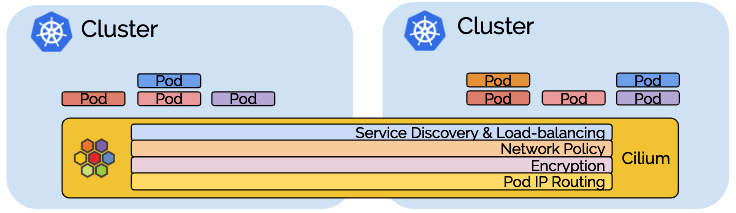

Cluster mesh extends the networking datapath across multiple clusters. It allows endpoints in all connected clusters to communicate while providing full policy enforcement. Load-balancing is available via Kubernetes annotations.

Setting up Cluster Mesh

A Cilium MultiCluster setup involves deploying Cilium, a networking and security solution for Kubernetes, across multiple Kubernetes clusters. This setup enables communication and workload deployment across clusters, providing benefits such as fault tolerance, disaster recovery, and improved performance. The main aspects of Cilium MultiCluster are explained below.

Architecture:

In a MultiCluster architecture with Cilium, you have multiple Kubernetes clusters deployed across different geographic regions, data centers, or cloud providers.

Each Kubernetes cluster runs Cilium as its CNI (Container Network Interface) plugin to manage networking, security, and observability for containerized workloads.

Connectivity:

Cilium facilitates connectivity between pods and services deployed across different Kubernetes clusters.

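Cluster Mesh requires the nodes of all clusters to have IP connectivity with each other, which is typically provided by network peering or VPN tunnels between the node networks. Each cluster also exposes a clustermesh-apiserver service that the Cilium agents in the other clusters connect to.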

Service Discovery:

Cilium provides service discovery capabilities across MultiCluster environments, allowing pods in one cluster to discover and communicate with services deployed in other clusters.

This enables seamless communication between microservices distributed across multiple clusters.

Network Policies:

Cilium allows the enforcement of network policies across MultiCluster deployments, ensuring that traffic between clusters adheres to security and compliance requirements.

Network policies define rules for traffic filtering, segmentation, and access control based on various criteria such as IP addresses, ports, and labels.
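As a minimal sketch of such a rule, the snippet below writes a CiliumNetworkPolicy that lets pods labeled name=x-wing in the cluster named master reach pods labeled name=rebel-base in the cluster named devmaster. Cilium exposes the cluster name to policy selectors through the io.cilium.k8s.policy.cluster label. The policy name, the pod labels, and the output file name are assumptions chosen for illustration; the cluster names match the cluster.name values used later in this guide.

```shell
#!/bin/sh
# Write an illustrative cross-cluster policy to a local file.
# Assumptions: the policy name, the pod labels (name=x-wing, name=rebel-base)
# and the file name are hypothetical; the cluster names must match the
# cluster.name values passed to Helm.
policy_file="${TMPDIR:-/tmp}/allow-cross-cluster.yaml"

cat > "$policy_file" <<'EOF'
apiVersion: cilium.io/v2
kind: CiliumNetworkPolicy
metadata:
  name: allow-cross-cluster
spec:
  description: Allow x-wing in cluster master to contact rebel-base in cluster devmaster
  endpointSelector:
    matchLabels:
      name: x-wing
      io.cilium.k8s.policy.cluster: master
  egress:
  - toEndpoints:
    - matchLabels:
        name: rebel-base
        io.cilium.k8s.policy.cluster: devmaster
EOF

echo "wrote $policy_file"
# To enforce it, apply the file in the cluster that runs the selected pods
# (not run here):
#   kubectl --context master apply -f "$policy_file"
```

Because an egress rule puts the selected pods into default-deny for all other egress traffic, a real deployment would usually also need a rule that allows DNS lookups.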

Identity-Aware Networking:

Cilium supports identity-aware networking across MultiCluster environments, allowing granular control over communication based on workload identities.

Cilium derives each workload's identity from its labels (including the namespace, the service account, and the cluster name), so these identities can be used to enforce fine-grained access control policies between clusters.

Observability:

Cilium provides comprehensive observability features for MultiCluster environments, including real-time network visibility, metrics collection, and distributed tracing.

Operators can monitor network traffic, performance metrics, and security events across clusters to ensure operational efficiency and security.

Security:

Cilium enhances security in MultiCluster environments by enforcing security policies, encrypting inter-cluster communication, and providing threat detection capabilities.

It protects against network-based attacks, ensures data confidentiality, and enables secure communication between clusters.

Scalability and Performance:

Cilium is designed for scalability and performance in MultiCluster deployments, leveraging eBPF (extended Berkeley Packet Filter) technology for efficient packet processing and low-latency networking.

It supports large-scale deployments spanning multiple clusters while maintaining high throughput and low latency for inter-cluster communication.

Cilium MultiCluster offers a robust networking and security solution for Kubernetes environments spanning multiple clusters. It enables seamless communication, service discovery, and security enforcement across clusters, empowering organizations to build resilient, scalable, and secure distributed applications.

Setting up Cluster Mesh

This is a step-by-step guide on how to build a mesh of Kubernetes clusters by connecting them together, enabling pod-to-pod connectivity across all clusters, and defining global services to load-balance between clusters.

Prerequisites

2 Kubernetes Clusters

Cluster-A

root@master:~# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master.arobyte.tech Ready control-plane 58m v1.26.0

worker1.arobyte.tech NotReady <none> 41m v1.26.0

worker2.arobyte.tech NotReady <none> 40m v1.26.0

Cluster-B

root@devmaster:~# kubectl get nodes

NAME STATUS ROLES AGE VERSION

devmaster.arobyte.tech Ready control-plane 3h57m v1.26.0

devworker1.arobyte.tech NotReady <none> 39m v1.26.0

devworker2.arobyte.tech NotReady <none> 38m v1.26.0

Let's install Cilium on both clusters

Cluster-A

root@master:~# kubectl config use-context master

root@master:~# helm repo add cilium https://helm.cilium.io/

root@master:~# helm install cilium cilium/cilium --version 1.15.1 \
  --namespace kube-system \
  --set nodeinit.enabled=true \
  --set kubeProxyReplacement=partial \
  --set hostServices.enabled=false \
  --set externalIPs.enabled=true \
  --set nodePort.enabled=true \
  --set hostPort.enabled=true \
  --set cluster.name=master \
  --set cluster.id=1
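Cluster Mesh requires every cluster to have a unique name and a unique numeric ID, and in this guide those are the only two settings that differ between the clusters. One way to make that visible is to keep the settings in a values file per cluster instead of repeating --set flags. The sketch below generates such files; the function name, the output directory, and the file names are assumptions made for illustration, while the keys and values mirror the flags used in this guide.

```shell
#!/bin/sh
# Sketch: generate one Helm values file per cluster, mirroring the --set flags
# used in this guide. Function name, directory and file names are assumptions.
out_dir="${TMPDIR:-/tmp}"

write_cilium_values() {
  # $1 = cluster name (must be unique in the mesh)
  # $2 = cluster ID   (must be unique in the mesh)
  cat > "$out_dir/cilium-values-$1.yaml" <<EOF
nodeinit:
  enabled: true
kubeProxyReplacement: partial
hostServices:
  enabled: false
externalIPs:
  enabled: true
nodePort:
  enabled: true
hostPort:
  enabled: true
cluster:
  name: $1
  id: $2
EOF
}

write_cilium_values master 1
write_cilium_values devmaster 2

# Show that only the cluster block differs between the two files.
# diff exits non-zero when files differ, so its status is ignored here.
diff "$out_dir/cilium-values-master.yaml" "$out_dir/cilium-values-devmaster.yaml" || true

# To install from a file instead of --set flags (not run here):
#   helm install cilium cilium/cilium --version 1.15.1 \
#     --namespace kube-system -f "$out_dir/cilium-values-master.yaml"
```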

root@master:~# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master.arobyte.tech Ready control-plane 58m v1.26.0

worker1.arobyte.tech Ready <none> 41m v1.26.0

worker2.arobyte.tech Ready <none> 40m v1.26.0

Cluster-B

root@master:~# kubectl config use-context devmaster

root@master:~# helm install cilium cilium/cilium --version 1.15.1 \
  --namespace kube-system \
  --set nodeinit.enabled=true \
  --set kubeProxyReplacement=partial \
  --set hostServices.enabled=false \
  --set externalIPs.enabled=true \
  --set nodePort.enabled=true \
  --set hostPort.enabled=true \
  --set cluster.name=devmaster \
  --set cluster.id=2

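MetalLB must be installed on both clusters so that Services of type LoadBalancer, such as the clustermesh-apiserver Service created in the next step, receive an external IP address.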

Cluster-A

root@master:~# kubectl get pods -n metallb-system

NAME READY STATUS RESTARTS AGE

metallb-controller-9fcb75cf5-mds7w 1/1 Running 0 30m

metallb-speaker-cfsw4 4/4 Running 0 30m

metallb-speaker-nblvz 4/4 Running 0 30m

metallb-speaker-sr68w 4/4 Running 0 30m

Cluster-B

root@devmaster:~# kubectl get pods -n metallb-system

NAME READY STATUS RESTARTS AGE

metallb-controller-9fcb75cf5-8s5w4 1/1 Running 0 35m

metallb-speaker-v769s 4/4 Running 0 35m

metallb-speaker-vfg62 4/4 Running 0 35m

metallb-speaker-www9n 4/4 Running 0 35m
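MetalLB only hands out addresses from pools it has been configured with. The following is a minimal sketch of a layer-2 pool for Cluster-A, assuming a MetalLB release that is configured through the IPAddressPool and L2Advertisement resources and assuming layer-2 mode. The pool name, the advertisement name, and the address range are assumptions; the range was chosen so that it contains the 172.16.16.150 address that appears in the Cluster Mesh output of this guide.

```shell
#!/bin/sh
# Write an illustrative MetalLB layer-2 configuration for Cluster-A.
# Assumptions: resource names and the address range are hypothetical and must
# be adapted to free addresses on the node network of each cluster.
metallb_file="${TMPDIR:-/tmp}/metallb-pool-cluster-a.yaml"

cat > "$metallb_file" <<'EOF'
apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: clustermesh-pool
  namespace: metallb-system
spec:
  addresses:
  - 172.16.16.150-172.16.16.160
---
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: clustermesh-l2
  namespace: metallb-system
spec:
  ipAddressPools:
  - clustermesh-pool
EOF

echo "wrote $metallb_file"
# To apply it in Cluster-A (not run here):
#   kubectl --context master apply -f "$metallb_file"
```

Cluster-B would need its own pool with a range taken from its node network, which must not overlap with the range used in Cluster-A.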

After installing the Cilium CNI, all nodes in Cluster-A and Cluster-B are in the Ready state.

Enable Cilium Multicluster on Both Clusters

root@master:~# cilium clustermesh enable --context master --service-type LoadBalancer

root@master:~# cilium clustermesh status --context master --wait

✅ Service "clustermesh-apiserver" of type "LoadBalancer" found

✅ Cluster access information is available:

- 172.16.16.150:2379

⌛ Waiting (0s) for deployment clustermesh-apiserver to become ready: only 0 of 1 replicas are available

root@master:~# cilium clustermesh enable --context devmaster --service-type LoadBalancer

root@master:~# cilium clustermesh status --context master --wait

✅ Service "clustermesh-apiserver" of type "LoadBalancer" found

✅ Cluster access information is available:

- 172.16.16.150:2379

✅ Deployment clustermesh-apiserver is ready

🔌 No cluster connected

🔀 Global services: [ min:0 / avg:0.0 / max:0 ]

root@master:~# cilium clustermesh status --context devmaster --wait

✅ Service "clustermesh-apiserver" of type "LoadBalancer" found

✅ Cluster access information is available:

- 172.17.17.150:2379

✅ Deployment clustermesh-apiserver is ready

🔌 No cluster connected

🔀 Global services: [ min:0 / avg:0.0 / max:0 ]

Cluster Mesh is now enabled on both clusters, but the clusters are not yet connected to each other.

Let's connect the two clusters

root@master:~# cilium clustermesh connect --context master \
  --destination-context devmaster

✅ Detected Helm release with Cilium version 1.15.1

✨ Extracting access information of cluster devmaster...

🔑 Extracting secrets from cluster devmaster...

ℹ️ Found ClusterMesh service IPs: [172.17.17.150]

✨ Extracting access information of cluster master...

🔑 Extracting secrets from cluster master...

ℹ️ Found ClusterMesh service IPs: [172.16.16.150]

⚠️ Cilium CA certificates do not match between clusters. Multicluster features will be limited!

ℹ️ Configuring Cilium in cluster 'master' to connect to cluster 'devmaster'

ℹ️ Configuring Cilium in cluster 'devmaster' to connect to cluster 'master'

✅ Connected cluster master and devmaster!

Let’s verify the status of the Cilium cluster mesh once again

root@master:~# cilium clustermesh status --context master --wait

✅ Service "clustermesh-apiserver" of type "LoadBalancer" found

✅ Cluster access information is available:

- 172.16.16.150:2379

✅ Deployment clustermesh-apiserver is ready

✅ All 3 nodes are connected to all clusters [min:1 / avg:1.0 / max:1]

🔌 Cluster Connections:

- devmaster: 3/3 configured, 3/3 connected

🔀 Global services: [ min:0 / avg:0.0 / max:0 ]

root@master:~# cilium clustermesh status --context devmaster --wait

✅ Service "clustermesh-apiserver" of type "LoadBalancer" found

✅ Cluster access information is available:

- 172.17.17.150:2379

✅ Deployment clustermesh-apiserver is ready

✅ All 3 nodes are connected to all clusters [min:1 / avg:1.0 / max:1]

🔌 Cluster Connections:

- master: 3/3 configured, 3/3 connected

🔀 Global services: [ min:0 / avg:0.0 / max:0 ]

Verify the Kubernetes Services of the Cluster Mesh control plane

root@master:~# kubectl config use-context devmaster

root@master:~# kubectl get svc -A | grep clustermesh

kube-system clustermesh-apiserver LoadBalancer 10.98.126.70 172.17.17.150 2379:32200/TCP 34m

kube-system clustermesh-apiserver-metrics ClusterIP None <none> 9962/TCP,9963/TCP 34m

root@master:~# kubectl config use-context master

Switched to context "master".

root@master:~# kubectl get svc -A | grep clustermesh

kube-system clustermesh-apiserver LoadBalancer 10.105.111.27 172.16.16.150 2379:31285/TCP 37m

kube-system clustermesh-apiserver-metrics ClusterIP None <none> 9962/TCP,9963/TCP 37m

Validate the connectivity by running the connectivity test in multi-cluster mode

root@master:~# cilium connectivity test --context master --multi-cluster devmaster

ℹ️ Monitor aggregation detected, will skip some flow validation steps

✨ [master] Creating namespace cilium-test for connectivity check...

✨ [devmaster] Creating namespace cilium-test for connectivity check...

✨ [master] Deploying echo-same-node service...

✨ [master] Deploying echo-other-node service...

✨ [master] Deploying DNS test server configmap...

✨ [devmaster] Deploying DNS test server configmap...

✨ [master] Deploying same-node deployment...

✨ [master] Deploying client deployment...

✨ [master] Deploying client2 deployment...

✨ [devmaster] Deploying echo-other-node service...

✨ [devmaster] Deploying other-node deployment...

✨ [host-netns] Deploying master daemonset...

✨ [host-netns] Deploying devmaster daemonset...

✨ [host-netns-non-cilium] Deploying master daemonset...

ℹ️ Skipping tests that require a node Without Cilium

⌛ [master] Waiting for deployment cilium-test/client to become ready...

⌛ [master] Waiting for deployment cilium-test/client2 to become ready...

⌛ [master] Waiting for deployment cilium-test/echo-same-node to become ready...

⌛ [devmaster] Waiting for deployment cilium-test/echo-other-node to become ready...

⌛ [master] Waiting for pod cilium-test/client-65847bf96-2mqtd to reach DNS server on cilium-test/echo-same-node-66f8958597-mbr7s pod...

⌛ [master] Waiting for pod cilium-test/client2-85585bdd-bnn77 to reach DNS server on cilium-test/echo-same-node-66f8958597-mbr7s pod...

⌛ [master] Waiting for pod cilium-test/client-65847bf96-2mqtd to reach DNS server on cilium-test/echo-other-node-5db9b7bbbc-lvsqj pod...

⌛ [master] Waiting for pod cilium-test/client2-85585bdd-bnn77 to reach DNS server on cilium-test/echo-other-node-5db9b7bbbc-lvsqj pod...

⌛ [master] Waiting for pod cilium-test/client-65847bf96-2mqtd to reach default/kubernetes service...

⌛ [master] Waiting for pod cilium-test/client2-85585bdd-bnn77 to reach default/kubernetes service...

⌛ [master] Waiting for Service cilium-test/echo-other-node to become ready...

⌛ [master] Waiting for Service cilium-test/echo-other-node to be synchronized by Cilium pod kube-system/cilium-6qfz6

⌛ [master] Waiting for Service cilium-test/echo-same-node to become ready...

⌛ [master] Waiting for Service cilium-test/echo-same-node to be synchronized by Cilium pod kube-system/cilium-6qfz6

⌛ [master] Waiting for DaemonSet cilium-test/host-netns-non-cilium to become ready...

⌛ [master] Waiting for DaemonSet cilium-test/host-netns to become ready...

⌛ [devmaster] Waiting for DaemonSet cilium-test/host-netns to become ready...

ℹ️ Skipping IPCache check

🔭 Enabling Hubble telescope...

⚠️ Unable to contact Hubble Relay, disabling Hubble telescope and flow validation: rpc error: code = Unavailable desc = connection error: desc = "transport: Error while dialing: dial tcp 127.0.0.1:4245: connect: connection refused"

ℹ️ Expose Relay locally with:

cilium hubble enable

cilium hubble port-forward&

ℹ️ Cilium version: 1.15.1

🏃 Running 66 tests ...

[=] Test [no-unexpected-packet-drops] [1/66]

[-] Scenario [no-unexpected-packet-drops/no-unexpected-packet-drops]

🟥 Found unexpected packet drops:

{

"labels": {

"direction": "EGRESS",

"reason": "Unsupported L2 protocol"

},

"name": "cilium_drop_count_total",

"value": 428

}

[=] Test [no-policies] [2/66]

.....................

[-] Scenario [no-policies/pod-to-pod]

[.] Action [no-policies/pod-to-pod/curl-ipv4-0: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> cilium-test/echo-same-node-66f8958597-mbr7s (10.0.2.109:8080)]

[.] Action [no-policies/pod-to-pod/curl-ipv4-1: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> cilium-test/echo-other-node-5db9b7bbbc-lvsqj (10.0.2.163:8080)]

[.] Action [no-policies/pod-to-pod/curl-ipv4-2: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> cilium-test/echo-same-node-66f8958597-mbr7s (10.0.2.109:8080)]

[.] Action [no-policies/pod-to-pod/curl-ipv4-3: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> cilium-test/echo-other-node-5db9b7bbbc-lvsqj (10.0.2.163:8080)]

[-] Scenario [no-policies/pod-to-world]

[.] Action [no-policies/pod-to-world/http-to-one.one.one.one-0: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> one.one.one.one-http (one.one.one.one:80)]

[.] Action [no-policies/pod-to-world/https-to-one.one.one.one-0: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> one.one.one.one-https (one.one.one.one:443)]

[.] Action [no-policies/pod-to-world/https-to-one.one.one.one-index-0: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> one.one.one.one-https-index (one.one.one.one:443)]

[.] Action [no-policies/pod-to-world/http-to-one.one.one.one-1: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> one.one.one.one-http (one.one.one.one:80)]

[.] Action [no-policies/pod-to-world/https-to-one.one.one.one-1: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> one.one.one.one-https (one.one.one.one:443)]

[.] Action [no-policies/pod-to-world/https-to-one.one.one.one-index-1: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> one.one.one.one-https-index (one.one.one.one:443)]

[-] Scenario [no-policies/pod-to-cidr]

[.] Action [no-policies/pod-to-cidr/external-1111-0: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> external-1111 (1.1.1.1:443)]

[.] Action [no-policies/pod-to-cidr/external-1111-1: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> external-1111 (1.1.1.1:443)]

[.] Action [no-policies/pod-to-cidr/external-1001-0: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> external-1001 (1.0.0.1:443)]

[.] Action [no-policies/pod-to-cidr/external-1001-1: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> external-1001 (1.0.0.1:443)]

[-] Scenario [no-policies/client-to-client]

[.] Action [no-policies/client-to-client/ping-ipv4-0: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> cilium-test/client2-85585bdd-bnn77 (10.0.2.148:0)]

[.] Action [no-policies/client-to-client/ping-ipv4-1: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> cilium-test/client-65847bf96-2mqtd (10.0.2.95:0)]

[-] Scenario [no-policies/pod-to-service]

[.] Action [no-policies/pod-to-service/curl-0: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> cilium-test/echo-other-node (echo-other-node.cilium-test:8080)]

[.] Action [no-policies/pod-to-service/curl-1: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> cilium-test/echo-same-node (echo-same-node.cilium-test:8080)]

[.] Action [no-policies/pod-to-service/curl-2: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> cilium-test/echo-same-node (echo-same-node.cilium-test:8080)]

[.] Action [no-policies/pod-to-service/curl-3: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> cilium-test/echo-other-node (echo-other-node.cilium-test:8080)]

[-] Scenario [no-policies/pod-to-hostport]

[.] Action [no-policies/pod-to-hostport/curl-0: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> cilium-test/echo-other-node-5db9b7bbbc-lvsqj (172.17.17.101:4000)]

❌ command "curl -w %{local_ip}:%{local_port} -> %{remote_ip}:%{remote_port} = %{response_code} --silent --fail --show-error --output /dev/null --connect-timeout 2 --max-time 10 http://172.17.17.101:4000" failed: error with exec request (pod=cilium-test/client-65847bf96-2mqtd, container=client): command terminated with exit code 28

ℹ️ curl output:

[.] Action [no-policies/pod-to-hostport/curl-1: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> cilium-test/echo-same-node-66f8958597-mbr7s (172.16.16.102:4000)]

[.] Action [no-policies/pod-to-hostport/curl-2: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> cilium-test/echo-same-node-66f8958597-mbr7s (172.16.16.102:4000)]

[.] Action [no-policies/pod-to-hostport/curl-3: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> cilium-test/echo-other-node-5db9b7bbbc-lvsqj (172.17.17.101:4000)]

❌ command "curl -w %{local_ip}:%{local_port} -> %{remote_ip}:%{remote_port} = %{response_code} --silent --fail --show-error --output /dev/null --connect-timeout 2 --max-time 10 http://172.17.17.101:4000" failed: error with exec request (pod=cilium-test/client2-85585bdd-bnn77, container=client2): command terminated with exit code 28

ℹ️ curl output:

[-] Scenario [no-policies/pod-to-host]

[.] Action [no-policies/pod-to-host/ping-ipv4-internal-ip: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> 192.168.0.114 (192.168.0.114:0)]

[.] Action [no-policies/pod-to-host/ping-ipv4-internal-ip: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> 172.16.16.101 (172.16.16.101:0)]

[.] Action [no-policies/pod-to-host/ping-ipv4-internal-ip: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> 172.16.16.102 (172.16.16.102:0)]

[.] Action [no-policies/pod-to-host/ping-ipv4-internal-ip: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> 192.168.0.114 (192.168.0.114:0)]

[.] Action [no-policies/pod-to-host/ping-ipv4-internal-ip: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> 172.16.16.101 (172.16.16.101:0)]

[.] Action [no-policies/pod-to-host/ping-ipv4-internal-ip: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> 172.16.16.102 (172.16.16.102:0)]

[-] Scenario [no-policies/host-to-pod]

[.] Action [no-policies/host-to-pod/curl-ipv4-0: cilium-test/host-netns-gnqtv (172.16.16.101) -> cilium-test/echo-same-node-66f8958597-mbr7s (10.0.2.109:8080)]

[.] Action [no-policies/host-to-pod/curl-ipv4-1: cilium-test/host-netns-gnqtv (172.16.16.101) -> cilium-test/echo-other-node-5db9b7bbbc-lvsqj (10.0.2.163:8080)]

[.] Action [no-policies/host-to-pod/curl-ipv4-2: cilium-test/host-netns-rswsn (172.17.17.102) -> cilium-test/echo-same-node-66f8958597-mbr7s (10.0.2.109:8080)]

[.] Action [no-policies/host-to-pod/curl-ipv4-3: cilium-test/host-netns-rswsn (172.17.17.102) -> cilium-test/echo-other-node-5db9b7bbbc-lvsqj (10.0.2.163:8080)]

[.] Action [no-policies/host-to-pod/curl-ipv4-4: cilium-test/host-netns-xfkhb (172.17.17.101) -> cilium-test/echo-same-node-66f8958597-mbr7s (10.0.2.109:8080)]

[.] Action [no-policies/host-to-pod/curl-ipv4-5: cilium-test/host-netns-xfkhb (172.17.17.101) -> cilium-test/echo-other-node-5db9b7bbbc-lvsqj (10.0.2.163:8080)]

[.] Action [no-policies/host-to-pod/curl-ipv4-6: cilium-test/host-netns-5x8wz (172.16.16.102) -> cilium-test/echo-same-node-66f8958597-mbr7s (10.0.2.109:8080)]

[.] Action [no-policies/host-to-pod/curl-ipv4-7: cilium-test/host-netns-5x8wz (172.16.16.102) -> cilium-test/echo-other-node-5db9b7bbbc-lvsqj (10.0.2.163:8080)]

[-] Scenario [no-policies/pod-to-external-workload]

[=] Test [no-policies-extra] [3/66]

.

[-] Scenario [no-policies-extra/pod-to-remote-nodeport]

[.] Action [no-policies-extra/pod-to-remote-nodeport/curl-0: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> cilium-test/echo-other-node (echo-other-node.cilium-test:8080)]

❌ command "curl -w %{local_ip}:%{local_port} -> %{remote_ip}:%{remote_port} = %{response_code} --silent --fail --show-error --output /dev/null --connect-timeout 2 --max-time 10 http://192.168.0.114:32184" failed: error with exec request (pod=cilium-test/client-65847bf96-2mqtd, container=client): command terminated with exit code 7

ℹ️ curl output:

[.] Action [no-policies-extra/pod-to-remote-nodeport/curl-1: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> cilium-test/echo-other-node (echo-other-node.cilium-test:8080)]

[.] Action [no-policies-extra/pod-to-remote-nodeport/curl-2: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> cilium-test/echo-same-node (echo-same-node.cilium-test:8080)]

[.] Action [no-policies-extra/pod-to-remote-nodeport/curl-3: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> cilium-test/echo-same-node (echo-same-node.cilium-test:8080)]

[.] Action [no-policies-extra/pod-to-remote-nodeport/curl-4: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> cilium-test/echo-other-node (echo-other-node.cilium-test:8080)]

❌ command "curl -w %{local_ip}:%{local_port} -> %{remote_ip}:%{remote_port} = %{response_code} --silent --fail --show-error --output /dev/null --connect-timeout 2 --max-time 10 http://192.168.0.114:32184" failed: error with exec request (pod=cilium-test/client2-85585bdd-bnn77, container=client2): command terminated with exit code 7

ℹ️ curl output:

[.] Action [no-policies-extra/pod-to-remote-nodeport/curl-5: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> cilium-test/echo-other-node (echo-other-node.cilium-test:8080)]

[.] Action [no-policies-extra/pod-to-remote-nodeport/curl-6: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> cilium-test/echo-same-node (echo-same-node.cilium-test:8080)]

[.] Action [no-policies-extra/pod-to-remote-nodeport/curl-7: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> cilium-test/echo-same-node (echo-same-node.cilium-test:8080)]

[-] Scenario [no-policies-extra/pod-to-local-nodeport]

[.] Action [no-policies-extra/pod-to-local-nodeport/curl-0: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> cilium-test/echo-other-node (echo-other-node.cilium-test:8080)]

[.] Action [no-policies-extra/pod-to-local-nodeport/curl-1: cilium-test/client-65847bf96-2mqtd (10.0.2.95) -> cilium-test/echo-same-node (echo-same-node.cilium-test:8080)]

[.] Action [no-policies-extra/pod-to-local-nodeport/curl-2: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> cilium-test/echo-other-node (echo-other-node.cilium-test:8080)]

[.] Action [no-policies-extra/pod-to-local-nodeport/curl-3: cilium-test/client2-85585bdd-bnn77 (10.0.2.148) -> cilium-test/echo-same-node (echo-same-node.cilium-test:8080)]

[=] Test [allow-all-except-world] [4/66]

................

[=] Test [client-ingress] [5/66]

------------

----------------

---------------

-------------------

Deploying a Simple Example Service

In Cluster-A, deploy:

root@master:~# kubectl config use-context master

Switched to context "master"

root@master:~# kubectl apply -f https://raw.githubusercontent.com/cilium/cilium/1.15.1/examples/kubernetes/clustermesh/global-service-example/cluster1.yaml

service/rebel-base created

deployment.apps/rebel-base created

configmap/rebel-base-response created

deployment.apps/x-wing created

In Cluster-B, deploy:

root@master:~# kubectl config use-context devmaster

Switched to context "devmaster".

root@master:~# kubectl apply -f https://raw.githubusercontent.com/cilium/cilium/1.15.1/examples/kubernetes/clustermesh/global-service-example/cluster2.yaml

service/rebel-base created

deployment.apps/rebel-base created

configmap/rebel-base-response created

deployment.apps/x-wing created
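What makes rebel-base a global service is an annotation on the Service object: Cilium merges the backends of Services that have the same name and namespace in each connected cluster and carry the service.cilium.io/global annotation. The sketch below writes a Service of that shape to a file. It is an illustration rather than a verbatim copy of the example manifests applied above; the port, the selector label, and the file name are assumptions.

```shell
#!/bin/sh
# Write an illustrative global Service manifest to a local file.
# Assumptions: port 80, the selector label name=rebel-base and the file name
# are hypothetical; the annotation is what marks the Service as global.
svc_file="${TMPDIR:-/tmp}/rebel-base-global-service.yaml"

cat > "$svc_file" <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: rebel-base
  annotations:
    # Backends of same-named Services in all connected clusters are merged.
    service.cilium.io/global: "true"
spec:
  type: ClusterIP
  ports:
  - port: 80
  selector:
    name: rebel-base
EOF

echo "wrote $svc_file"
# The same manifest would be applied in every cluster that should take part
# (not run here):
#   kubectl --context master apply -f "$svc_file"
#   kubectl --context devmaster apply -f "$svc_file"
```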

Let's validate the deployment on both clusters

Cluster-A

root@master:~# kubectl get pods

NAME READY STATUS RESTARTS AGE

rebel-base-5f575fcc5d-46sx4 1/1 Running 0 15m

rebel-base-5f575fcc5d-62nqv 1/1 Running 0 15m

x-wing-58c9c6d949-lgv7j 1/1 Running 0 15m

x-wing-58c9c6d949-vqqxv 1/1 Running 0 15m

Cluster-B

root@devmaster:~# kubectl get pods

NAME READY STATUS RESTARTS AGE

rebel-base-5f575fcc5d-8jfpl 1/1 Running 0 13m

rebel-base-5f575fcc5d-cnw8x 1/1 Running 0 13m

x-wing-58c9c6d949-5nbfh 1/1 Running 0 13m

x-wing-58c9c6d949-tbwfq 1/1 Running 0 13m

From either cluster, access the global service

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-1"}

We will see replies from pods in both clusters.

In Cluster-A, add the service.cilium.io/shared="false" annotation to the existing global service

root@master:~# kubectl annotate service rebel-base service.cilium.io/shared="false" --overwrite

service/rebel-base annotated

From Cluster-A, access the global service one more time

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-1"}

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-2"}

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-2"}

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-1"}

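We still see replies from pods in both clusters, because the annotation only stops the backends in Cluster-A from being shared with other clusters; Cluster-A can still reach the backends in Cluster-B.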
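Reading the distribution off individual curl calls gets tedious once more than a handful of requests are involved. A small helper can count the replies per cluster instead. The sketch below assumes the JSON reply format shown above; the function name tally_clusters is hypothetical, and the sample input repeats the four replies from the previous step.

```shell
#!/bin/sh
# Count replies per cluster from lines such as
#   {"Galaxy": "Alderaan", "Cluster": "Cluster-1"}
# read on stdin. Prints "<count> <cluster>" for each cluster, sorted by name.
tally_clusters() {
  grep -o '"Cluster": *"[^"]*"' |   # keep only the "Cluster": "..." pairs
    sed 's/.*: *"\(.*\)"/\1/' |     # strip the key and the quotes
    sort | uniq -c |                # count identical cluster names
    awk '{print $1, $2}'            # normalize uniq's padded output
}

# Sample input: the four replies shown in the previous step.
printf '%s\n' \
  '{"Galaxy": "Alderaan", "Cluster": "Cluster-1"}' \
  '{"Galaxy": "Alderaan", "Cluster": "Cluster-2"}' \
  '{"Galaxy": "Alderaan", "Cluster": "Cluster-2"}' \
  '{"Galaxy": "Alderaan", "Cluster": "Cluster-1"}' | tally_clusters
# prints:
#   2 Cluster-1
#   2 Cluster-2

# Against a live cluster (not run here):
#   for i in $(seq 1 20); do
#     kubectl exec deployment/x-wing -- curl -s rebel-base
#   done | tally_clusters
```

The -ti flags are left out of the live example because the output is piped rather than shown on a terminal.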

From Cluster-B, access the global service again

root@master:~# kubectl config use-context devmaster

Switched to context "devmaster".

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-2"}

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-2"}

We see replies only from pods in Cluster-B (reported as Cluster-2), because the global service in Cluster-A is no longer shared.

In Cluster-A, remove the service.cilium.io/shared annotation from the existing global service

root@master:~# kubectl config use-context master

Switched to context "master".

root@master:~# kubectl annotate service rebel-base service.cilium.io/shared-

service/rebel-base annotated

From either cluster, access the global service

root@master:~# kubectl config use-context master

Switched to context "master".

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-2"}

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-2"}

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-1"}

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-1"}

root@master:~# kubectl config use-context devmaster

Switched to context "devmaster".

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-1"}

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-2"}

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-1"}

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-2"}

root@master:~# kubectl exec -ti deployment/x-wing -- curl rebel-base

{"Galaxy": "Alderaan", "Cluster": "Cluster-1"}

We see replies from pods in both clusters again.